- Nearly 3 in 4 Americans (73%) have used ChatGPT for health-related purposes in the past year.

- More than half of Americans (55%) said ChatGPT helped them understand a diagnosis they didn’t fully grasp from a doctor’s appointment, and 1 in 4 are comfortable sharing sensitive health data with it.

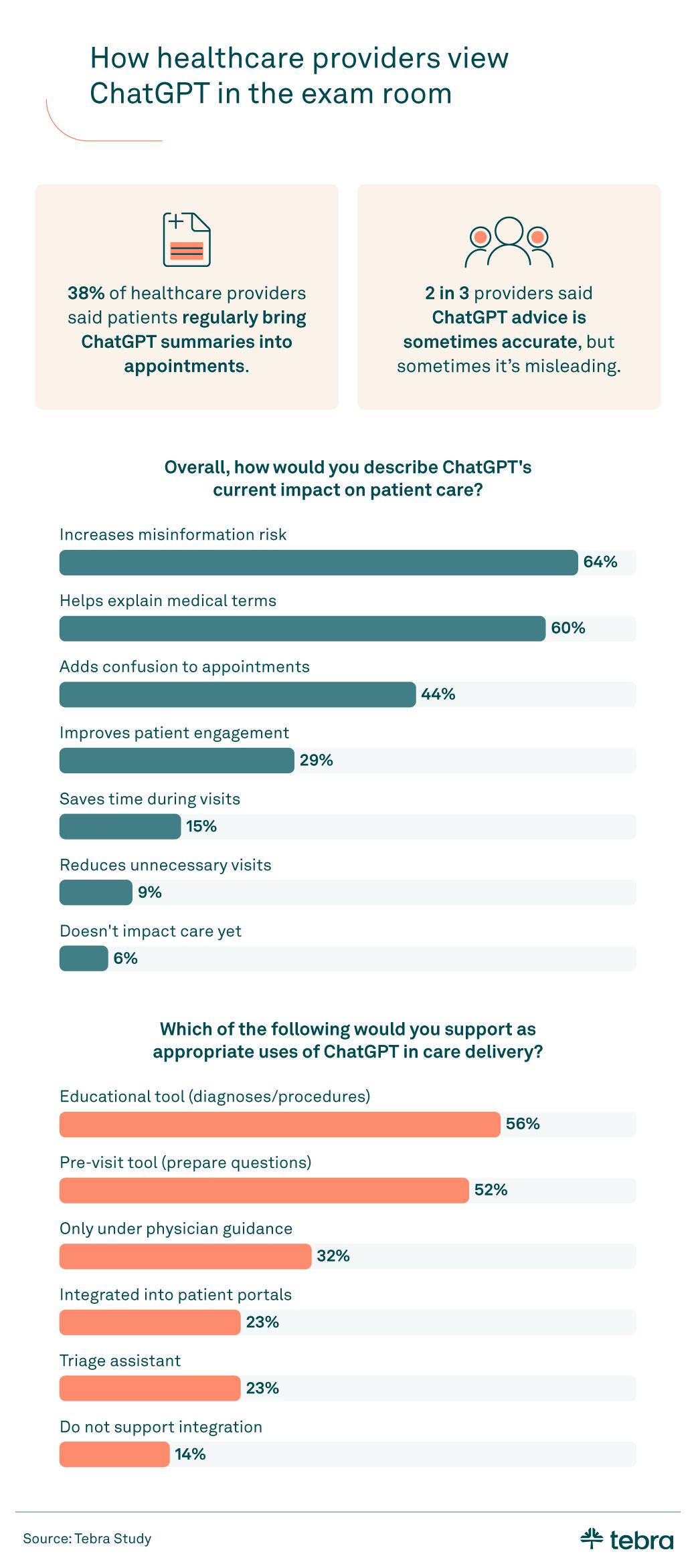

- 38% of healthcare providers said patients regularly bring ChatGPT-generated summaries into appointments.

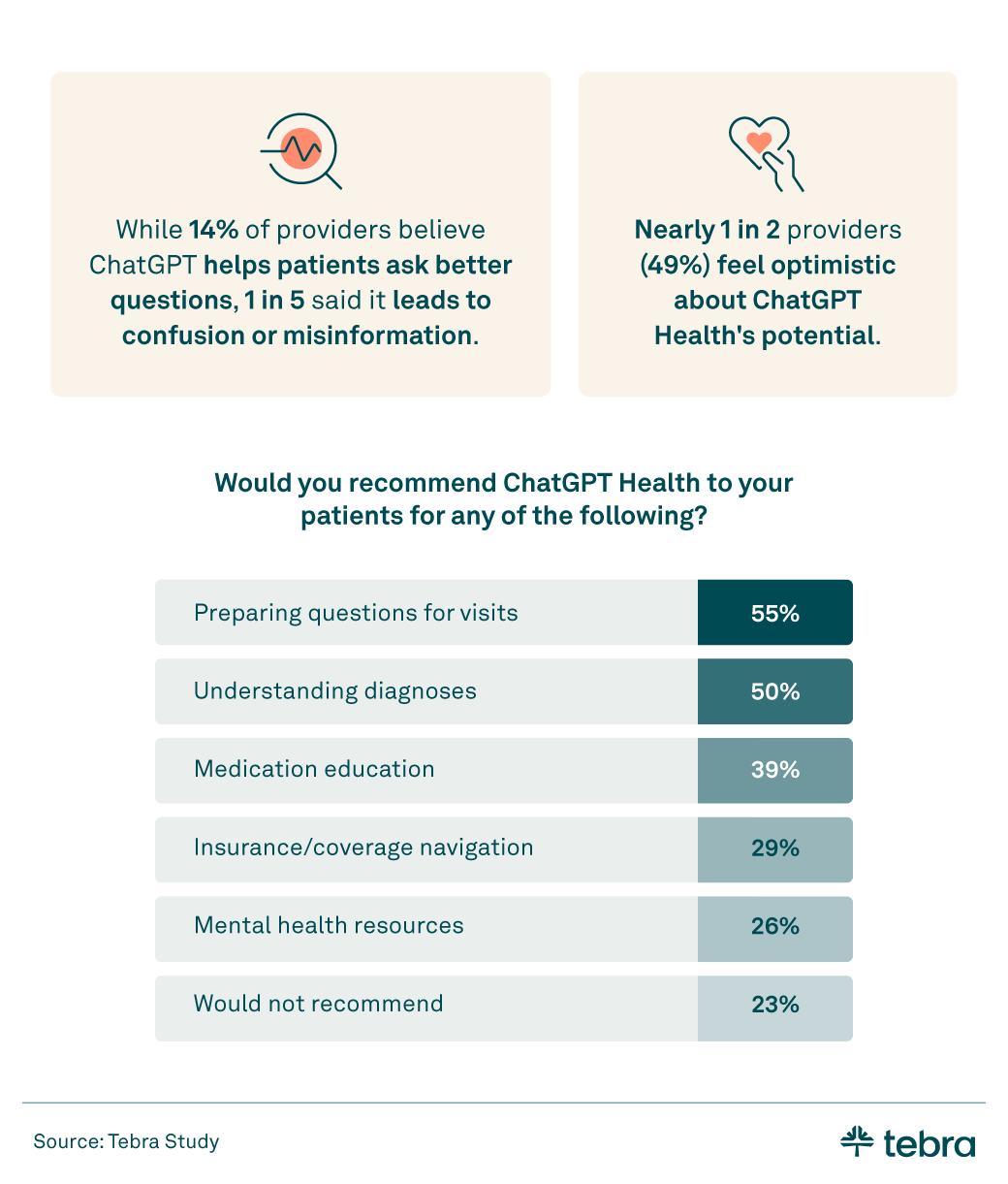

- Nearly 1 in 2 providers (49%) feel optimistic about ChatGPT Health’s potential.

Artificial intelligence is no longer a future concept in healthcare. Patients are now walking into appointments with AI-generated summaries, second opinions, and treatment questions already in hand.

To understand what this shift means for independent and small-group private practices, Tebra surveyed 803 Americans and 211 healthcare providers in February 2026 about how ChatGPT is influencing care decisions on both sides of the exam room.

ChatGPT and patient care

Patients are turning to AI tools before, during, and even after appointments (CDC.gov & Generative AI), which is reshaping digital intake, visit preparation, and follow-up conversations. The shift is also changing how people process medical information and make care decisions.

- 73% of Americans have used ChatGPT for health-related purposes (for example, to interpret health information from national public health sites such as the CDC) in the past year, and more than 1 in 5 (21%) have brought ChatGPT-generated advice to an appointment to discuss with their doctor.

- More than half (55%) said ChatGPT helped them understand a diagnosis they didn't fully grasp from a doctor's appointment.

- 41% have sought a second opinion based on ChatGPT's health advice.

These new behaviors directly affect digital intake, care coordination, and follow-up workflows in independent practices, especially where staff are already stretched thin.

Trust in ChatGPT among patients

- The majority of patients (68%) have some level of trust in ChatGPT for health advice:

  - Completely trust: 2%

  - Mostly trust: 21%

  - Somewhat trust: 45%

  - Rarely/Do not trust: 32%

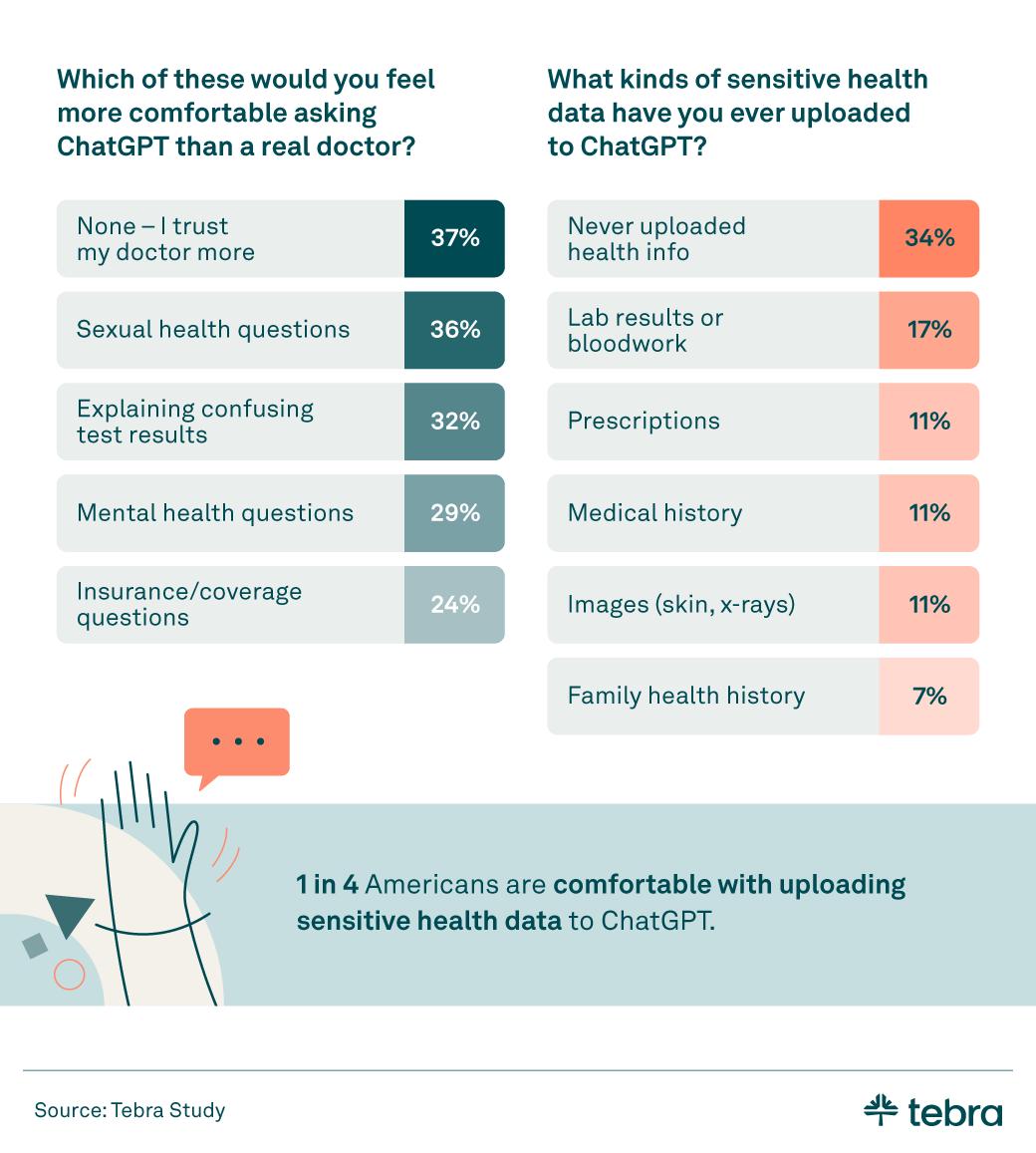

- 1 in 4 are comfortable sharing sensitive health data with ChatGPT, most often providing lab results or bloodwork (17%).

- 20% have followed ChatGPT's health advice instead of their doctor's recommendations. Gen X (23%) and Gen Z (21%) patients were the most likely to do so. That behavior runs counter to emerging medical guidance, which positions AI as a tool to augment, not replace, clinical judgment (American Medical Association).

- However, 74% of Americans still trust their doctor's advice more than ChatGPT's advice.

For independent practices, this trust gap is an opportunity to guide how AI is used, reinforcing the clinician’s role while offering patients safe, curated digital tools.

Thoughts on the new ChatGPT Health

ChatGPT Health is an emerging version of ChatGPT designed to support health-related questions, education, and care navigation (Congressional Research Service overview of AI in health care). It aims to provide more structured, healthcare-focused guidance for tasks like understanding diagnoses, preparing questions for appointments, and reviewing treatment information.

As interest grows, private practices may increasingly encounter patients who are using it as part of their care experience.

- 62% of Americans are at least somewhat interested in ChatGPT Health, and 44% would consider using it as part of their care.

- Interest in ChatGPT Health by generation:

  - Gen Z: 51%

  - Millennials: 65%

  - Gen X: 66%

  - Baby Boomers: 68%

As these tools evolve, practices will need clear policies on when and how clinicians and staff can use AI so they can realize the benefits without introducing new risks, in line with national efforts to govern health AI safely (HHS Artificial Intelligence Strategy & Implementation).

What AI-informed patients mean for providers

Providers are weighing both the benefits and the risks of generative AI (rapid review of generative AI in health communication and public health) as they respond in real time to AI-informed patients. That includes the implications for staffing capacity, front-desk workflows, and clinical documentation in the EHR. Their perspectives reveal where ChatGPT may support care, where it may create friction, and how private practices can prepare for what comes next.

- Over 1 in 3 providers (37%) said patients have made major health decisions based on ChatGPT advice before consulting them.

- 38% of healthcare providers said patients regularly bring ChatGPT-generated summaries into appointments.

- Nearly 1 in 2 providers (49%) feel optimistic about ChatGPT Health's potential.

Preparing your practice for AI-informed patients

AI is now part of the patient journey, whether providers invite it in or not. For private practices, the opportunity lies in setting clear expectations and leading the conversation. Consider:

- Embedding a standard question about AI use into digital intake or pre-visit forms

- Addressing misinformation with empathy while documenting key AI-related questions in the EHR

- Offering a short list of trusted digital resources, including your own patient education, any vetted AI tools, and national public health sites such as CDC.gov

When these steps are built into existing intake, messaging, and documentation workflows, practices can manage AI-related conversations without adding more manual work for staff.

These actions can help maintain clinical authority while strengthening patient-provider trust. When practices have a structured approach to AI-informed discussions, providers can reduce confusion, protect patient safety (AMA Principles for Augmented Intelligence), and position themselves as expert guides.

Methodology

Tebra conducted two surveys to explore how Americans are using ChatGPT for health-related purposes and how healthcare providers are responding to this shift in patient behavior.

Patient survey: Tebra surveyed 803 Americans about their use of ChatGPT for health-related tasks. The generational breakdown was Gen Z (21%), Millennials (49%), Gen X (22%), and Baby Boomers (8%).

Provider survey: Tebra surveyed 211 healthcare providers about their experiences with patients bringing ChatGPT-generated health information into appointments, their assessment of AI accuracy, and their views on integrating ChatGPT into clinical workflows.

Data was collected in February 2026.

About Tebra

Tebra, headquartered in Southern California, empowers independent healthcare practices with cutting-edge AI and automation to drive growth, streamline care, and boost efficiency. Our all-in-one EHR and billing platform delivers everything you need to attract and engage your patients, including online scheduling, reputation management, and digital communications.

Inspired by "vertebrae," our name embodies our mission to be the backbone of healthcare success. With over 165,000 providers and 190 million patient records, Tebra is redefining healthcare through innovation and a commitment to customer success. We're not just optimizing operations — we're ensuring private practices thrive.

Fair use statement

The information in this article may be used for noncommercial purposes only. If shared, proper attribution with a link back to Tebra must be included.